By: Serhii Yashchuk

5 APR 2021

It is common in IT companies for a development team to work on several applications and sites at once, and sometimes a team has to take over an existing project for further work. Switching from one project to another is inconvenient when each project requires a different development environment, and developers end up wasting time reconfiguring their machines.

That is how it used to be. Nowadays, many companies use Docker or Docker-Compose, and our developers are among them.

What are Docker and Docker-Compose, and how do you install, configure, and actually use them in development? For beginners this may look like an obstacle, but it is better to get over it early, because sooner or later you will have to deal with it anyway.

To learn Docker, it is not enough to visit the official site; you also need to study its documentation. Many sources describe what Docker is for and how to work with it. I will try to give a detailed overview, focusing on what is most relevant right now. So let's get started!

Docker is an open platform for developing and running applications. It lets you separate applications from infrastructure, so that the infrastructure can be managed in the same way as the applications themselves. By using Docker's approach to testing and deploying code quickly, you can significantly reduce the delay between writing code and running it in production.

Docker Installation

First of all, Docker needs to be installed. The installation process may differ depending on the operating system. In this article, I will describe the installation process for Ubuntu 20.04.

You need to take the following steps:

1. Update the existing list of packages:

sudo apt update

2. Install several prerequisite packages that allow apt to use repositories over HTTPS:

sudo apt install apt-transport-https ca-certificates curl software-properties-common

3. Add the GPG key for the official Docker repository to your system:

curl -fsSL | sudo apt-key add -

4. Add the Docker repository to apt sources:

sudo add-apt-repository "deb [arch=amd64]

5. Update the package database again so that it includes the Docker packages from the newly added repository:

sudo apt update

6. Make sure the installation will be done from the Docker repository, not the default Ubuntu repository:

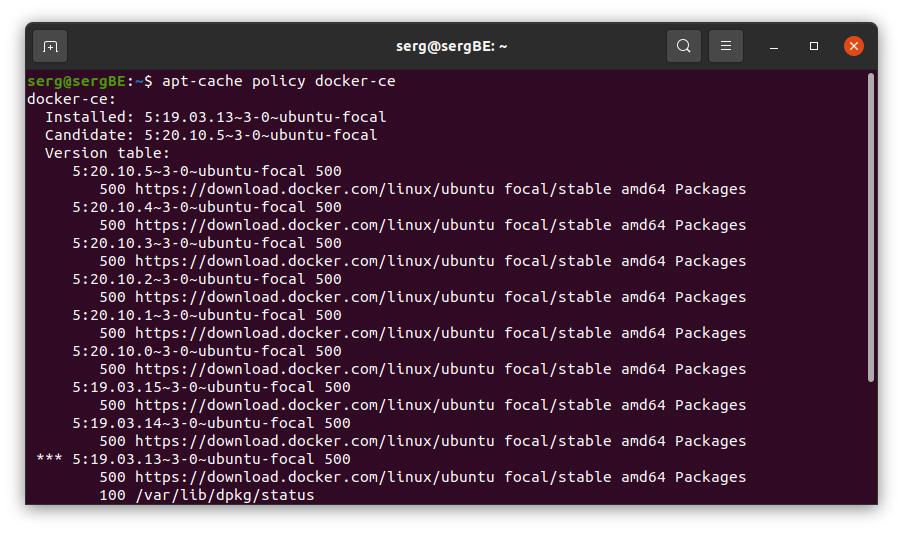

apt-cache policy docker-ce

Note that docker-ce is not installed yet, but the installation candidate comes from the Docker repository for Ubuntu 20.04 (focal).

7. Install Docker:

sudo apt install docker-ce

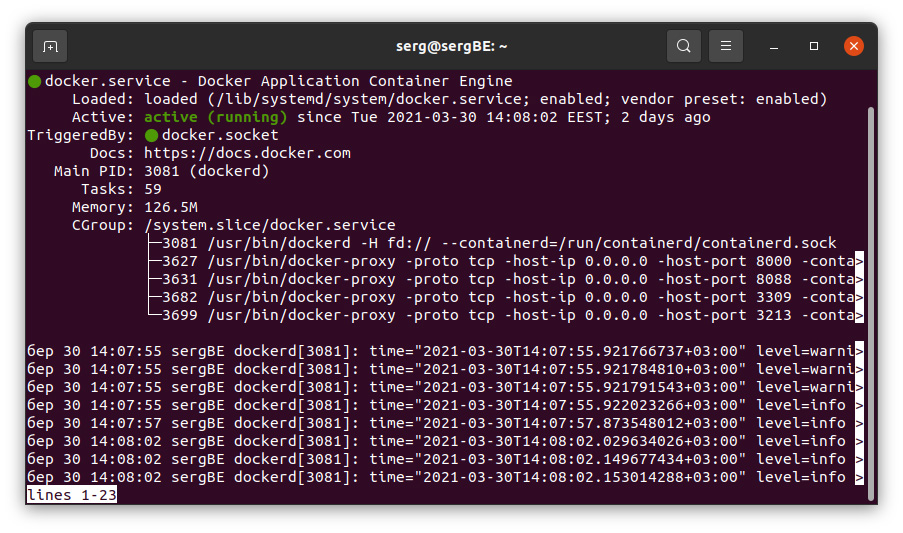

8. Docker should now be installed, the daemon started, and the service enabled to start at boot. Check that the service is running:

sudo systemctl status docker

The output should look similar to the following, indicating that the service is active and running:

Once the Docker installation is complete, you have access not only to the Docker service (the daemon) but also to the docker command-line utility, also known as the Docker client.
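The checks from the installation steps can be collected into a small script. This is a minimal sketch rather than part of the original walkthrough: the helper names have_cmd and check_docker are made up for illustration, and the script assumes a systemd-based system such as Ubuntu 20.04.

```shell
#!/bin/sh
# have_cmd NAME: succeed if NAME can be found as a command (hypothetical helper).
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# check_docker: report whether the client is installed and what state the service is in.
check_docker() {
    if ! have_cmd docker; then
        echo "docker client: not found"
        return 1
    fi
    echo "docker client: $(docker --version)"
    # systemctl is-active prints the unit state, for example "active".
    if have_cmd systemctl; then
        echo "docker service: $(systemctl is-active docker 2>/dev/null)"
    fi
    return 0
}

check_docker || echo "Install Docker first (see the steps above)."
```

On a machine without Docker the script only prints a hint, so it is safe to run before the installation as well.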

Using the Docker command

Using docker consists of passing it a chain of options and commands, followed by arguments. The syntax takes this form:

docker [option] [command] [arguments]

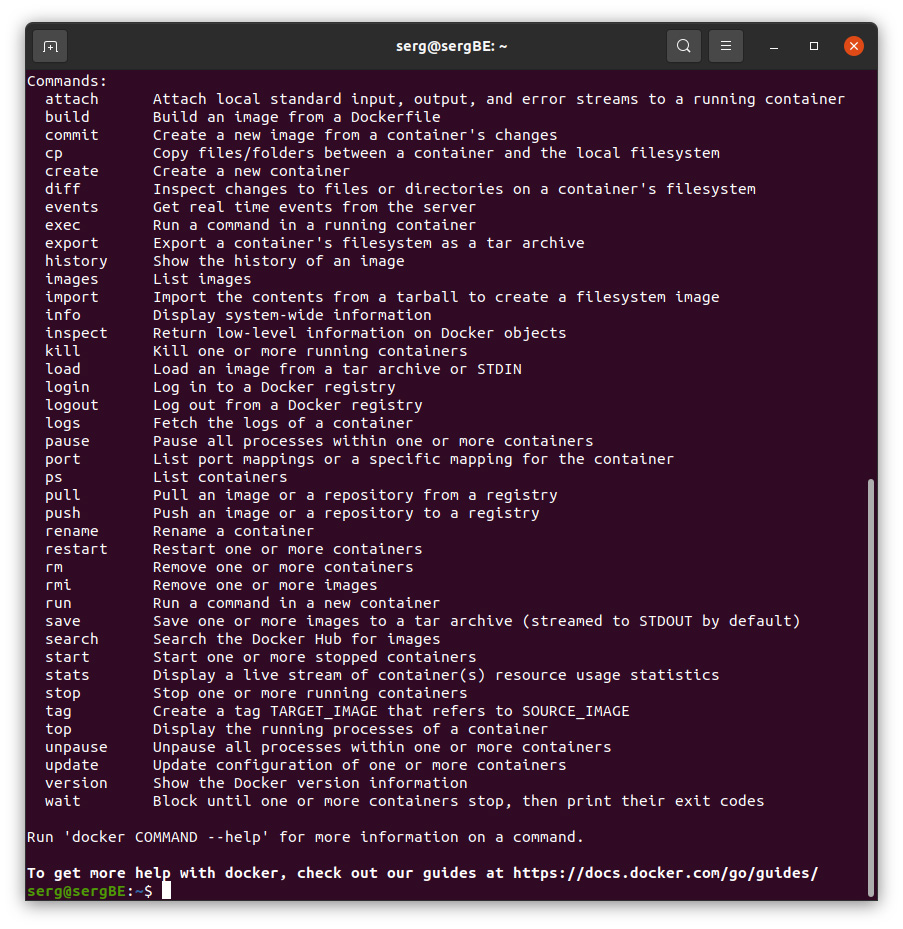

To view all available subcommands, type:

docker

The complete list of subcommands looks like this:

To view the options available for a particular command, type:

docker docker-subcommand --help

To view system-wide information on Docker, enter:

docker info
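As a concrete illustration of the two commands above, the sketch below prints the help for one subcommand and extracts a single field from docker info. The helper name subcommand_help is hypothetical; the --format option of docker info takes a Go template, and ServerVersion is one of the fields it exposes.

```shell
#!/bin/sh
# subcommand_help NAME: print the help text for one docker subcommand (hypothetical helper).
subcommand_help() {
    docker "$1" --help
}

if command -v docker >/dev/null 2>&1; then
    # Show only the first lines of the help for "docker ps".
    subcommand_help ps | head -n 5
    # Print just the daemon version instead of the full info output.
    docker info --format '{{.ServerVersion}}'
else
    echo "docker not found; skipping"
fi
```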

Working with Docker images

Docker containers are built from Docker images. By default, Docker pulls these images from Docker Hub, a Docker registry managed by Docker, the company behind the Docker project. Anyone can host their images on Docker Hub, so most applications and Linux distributions you will need already have images hosted there.

To check whether you can access and download images from Docker Hub, enter the following command:

docker run hello-world

You can search for available images on Docker Hub using the docker command with the search subcommand. For example, to find an Ubuntu image, enter:

docker search ubuntu

The command searches Docker Hub and returns a list of all images whose names match the search string.
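Search results can be long. As a sketch, assuming you only care about official images, the search can be narrowed with the --filter and --limit options of docker search. The helper name search_official and the limit of 5 are choices made for this example.

```shell
#!/bin/sh
# search_official TERM: list at most five official images matching TERM (hypothetical helper).
search_official() {
    docker search --filter is-official=true --limit 5 "$1"
}

if command -v docker >/dev/null 2>&1; then
    search_official ubuntu
else
    echo "docker not found; skipping"
fi
```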

To download the official ubuntu image to your computer, run the following command:

docker pull ubuntu

After an image has been downloaded, you can start a container from it using the run subcommand.

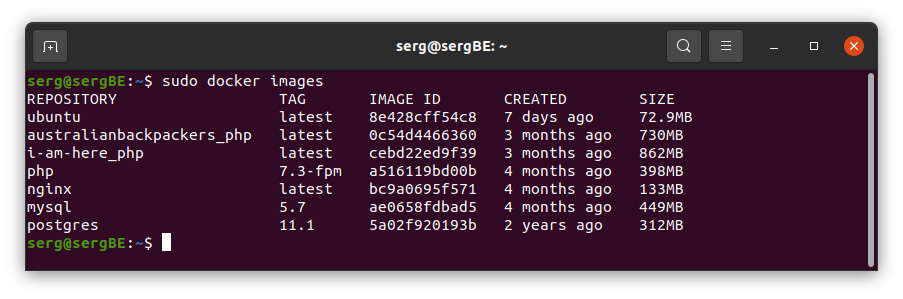

To list the images that have been downloaded to your computer, run:

docker images
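The docker pull ubuntu command fetches the image tagged latest. As a sketch, assuming you would rather pin the release that matches the Ubuntu 20.04 host used in this article, you can name a tag explicitly. The image_ref helper is hypothetical and only builds the name:tag string.

```shell
#!/bin/sh
# image_ref NAME [TAG]: build a "name:tag" reference.
# Docker itself assumes the tag "latest" when none is given.
image_ref() {
    echo "$1:${2:-latest}"
}

ref=$(image_ref ubuntu 20.04)
echo "Image reference: $ref"

if command -v docker >/dev/null 2>&1; then
    docker pull "$ref"       # download that specific tag
    docker images ubuntu     # list only the local images of the ubuntu repository
else
    echo "docker not found; skipping pull"
fi
```

Pinning a tag keeps the environment reproducible, because latest can point to a different release over time.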

Running Docker containers

In general, containers are very similar to virtual machines, but they use resources more conservatively.

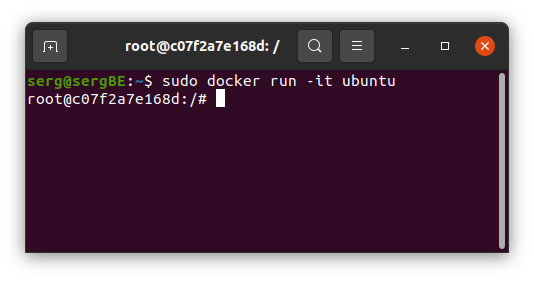

The combination of the -i and -t switches gives you access to an interactive command shell inside the container:

docker run -it ubuntu

The command prompt changes to reflect the fact that you are now working inside the container:

root@d9b100f2f636:/#

Notice the container ID in the command prompt. In this example, it is d9b100f2f636. When you want to remove the container, you will need this ID to identify it.

Now you can run any command inside the container. For example, let's update the package database inside the container. You do not need to prefix commands with sudo, because you are working inside the container as the root user:

apt update
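If you only need the result of a single command, an interactive shell is not required. The sketch below runs one command in a fresh container and removes the container afterwards with the --rm flag of docker run. The helper name run_once is made up for this example.

```shell
#!/bin/sh
# run_once IMAGE CMD...: run CMD in a new container and delete the container when it exits.
# (run_once is a hypothetical helper; --rm is a standard docker run flag.)
run_once() {
    docker run --rm "$@"
}

if command -v docker >/dev/null 2>&1; then
    # Print the distribution details from inside an ubuntu container.
    run_once ubuntu cat /etc/os-release
else
    echo "docker not found; skipping"
fi
```

Because of --rm, such one-off runs do not leave stopped containers behind.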

Docker container management

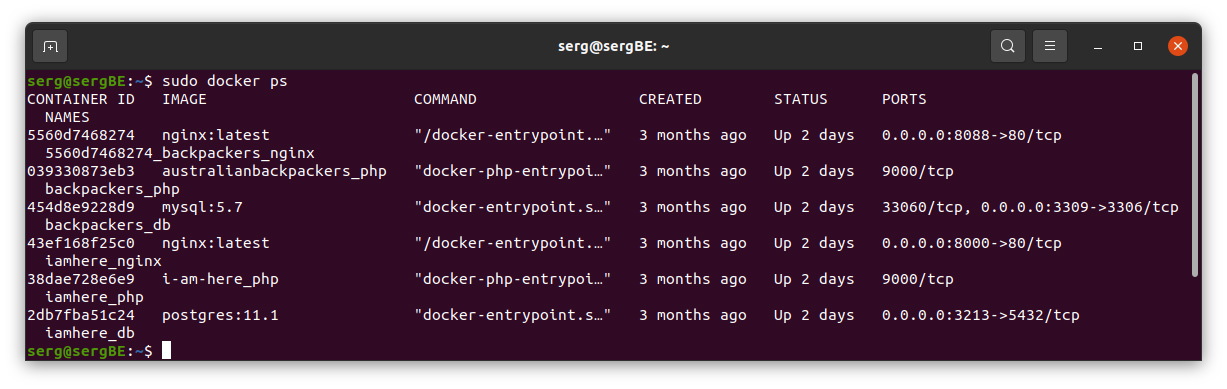

After using Docker for a while, you will have many active (running) and inactive containers on your computer. To view the active ones, use the following command:

docker ps

The output will look something like this:

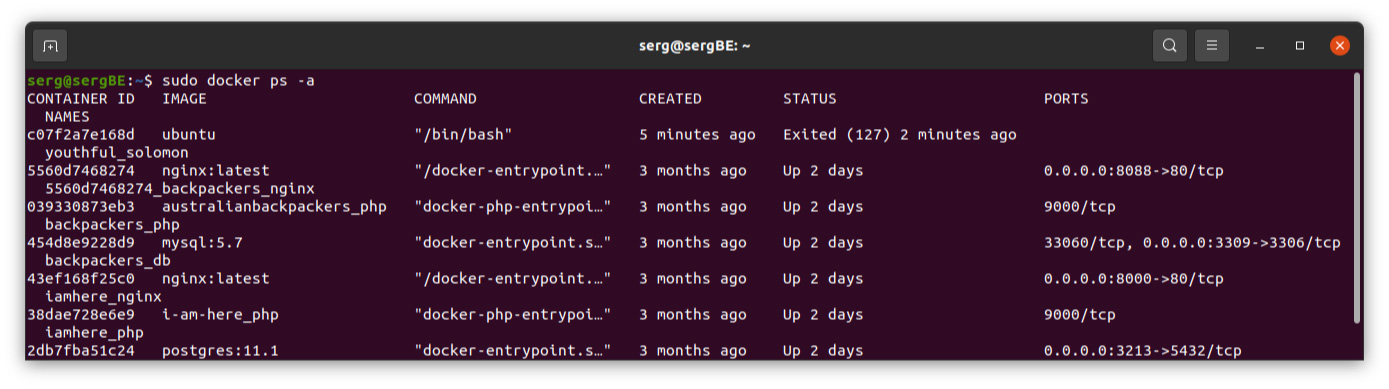

To view all containers, active and inactive, use the docker ps command with the -a switch:

docker ps -a

The output will look like this:

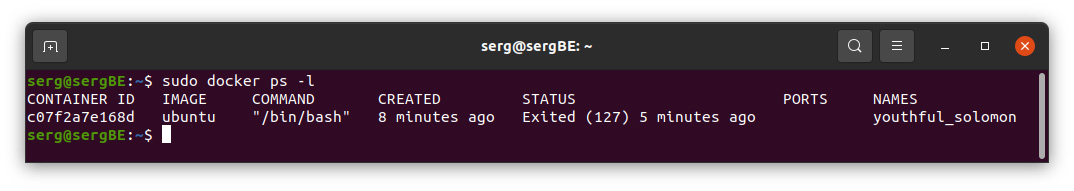

To view the last container you created, pass the -l switch:

docker ps -l

To start a stopped container, use docker start followed by the container ID or name. Let's start the Ubuntu-based container with ID c07f2a7e168d:

docker start c07f2a7e168d

The container will start, and you can use docker ps to check its status.

To stop a running container, use docker stop with the container ID or name.

docker stop name_container

Once you decide that you no longer need a container, use the docker rm command to remove it, again followed by the container ID or name. Use docker ps -a to find the ID or name of the container associated with the hello-world image, then remove it:

docker rm name_container
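The management commands above can be tried end to end on a throwaway container. This is a minimal sketch: the container name demo-ubuntu and the lifecycle helper are assumptions made for the example, and sleep 300 is only there to keep the container running long enough to inspect it.

```shell
#!/bin/sh
NAME="demo-ubuntu"   # hypothetical container name, chosen for this example

# lifecycle NAME: create, list, stop, and remove one container.
lifecycle() {
    docker run -d --name "$1" ubuntu sleep 300 || return 1   # start in the background
    docker ps --filter "name=$1"                              # confirm it is running
    docker stop "$1"                                          # stop it
    docker ps -a --filter "name=$1"                           # still listed, now exited
    docker rm "$1"                                            # remove it for good
}

if command -v docker >/dev/null 2>&1; then
    lifecycle "$NAME"
else
    echo "docker not found; skipping"
fi
```

Giving the container a name with --name means you do not have to look up its ID first. The docker stop step may take about ten seconds here, because Docker waits for the process to exit before killing it.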

We, the developers at Appus Studio, have concluded that Docker is well worth using. It is especially valuable when developers often have to switch between different projects.

Yes, Docker requires knowledge and practical experience, but in the long run it reduces the time needed to switch to another project.

Now let's look at the pros and cons of containerization.

PROS

1. Abstracting the application from the host

The main idea of a container is complete standardization. A container connects to the host through a well-defined interface. It is a sandboxed application that does not depend on the host's architecture or resources. To the host, the container is a kind of "black box": it does not matter what is inside.

2. Scaling

Another benefit of abstracting the application from the host is the ability to scale easily and linearly. Several containers can run on one developer machine, the same containers can run on a test server, and in a production environment they can be scaled out further.

3. Version and dependency management

With containers, the developer bundles all components and dependencies with the application, so they can be handled as a single unit. There is no need to install additional components or dependencies on the host to run the application inside the container; the ability to run a Docker container is enough.

4. Environment Isolation

Isolation in containers does not reach the same level as in virtualization. Instead, a container is a lightweight execution environment with process-level isolation, and all containers share the host's kernel. This lets containers start very quickly, and running even hundreds of lightweight containers on one machine is feasible.

5. Using layers

Containers are lightweight because images are built from layers. When several containers use the same layer, they share it instead of duplicating it, which minimizes disk usage.

6. Compilation

This is the ability to script the specific steps that create a new image, that is, to customize the environment. You can write this script as code and keep it in a version control system.
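Points 5 and 6 can be seen together in a short experiment. The sketch below writes a two-line Dockerfile, builds an image from it, and prints the image history. The write_dockerfile helper, the demo-curl image tag, and the choice of installing curl are all assumptions made for illustration.

```shell
#!/bin/sh
# write_dockerfile DIR: write a two-instruction Dockerfile into DIR (hypothetical helper).
write_dockerfile() {
    cat > "$1/Dockerfile" <<'EOF'
FROM ubuntu:20.04
RUN apt-get update && apt-get install -y curl
EOF
}

workdir=$(mktemp -d)
write_dockerfile "$workdir"
cat "$workdir/Dockerfile"

if command -v docker >/dev/null 2>&1; then
    docker build -t demo-curl "$workdir"   # demo-curl is a hypothetical image tag
    docker history demo-curl               # one row per build step, with its size
else
    echo "docker not found; skipping build"
fi
rm -rf "$workdir"
```

The Dockerfile is plain text, so it can be committed to version control next to the application. In the history output, the steps inherited from ubuntu:20.04 are the layers that any other image based on the same base would share.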

CONS

1. Fine-tuning

Under high load and at large scale, systems need very precise, high-quality configuration.

2. Backward compatibility

Docker is evolving rapidly, and one of the downsides of this rapid development is limited backward compatibility in some areas.

3. Uninstallation

Removing Docker containers is time-consuming and involves a considerable number of input/output operations.

4. Performance

Any additional layer on top of the system inevitably increases load and resource consumption.

5. Architecture

Containerization is an additional layer on top of the OS, which complicates the overall architecture of a solution.

6. Support

Supporting and maintaining Docker containers requires not only system administration skills but also deep knowledge of Docker.

So we have covered the basics of using Docker. In the next article, we will look at Docker-Compose and how it differs from Docker.
